Machine learning model increases revenues in a Mall.

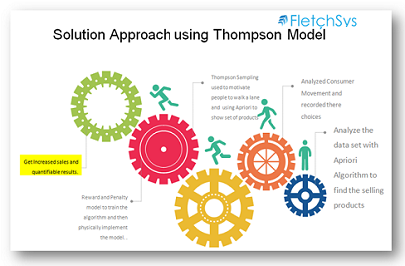

The below citation is a glimpse of our experiment using Thompson Sampling and Apriori Algorithm

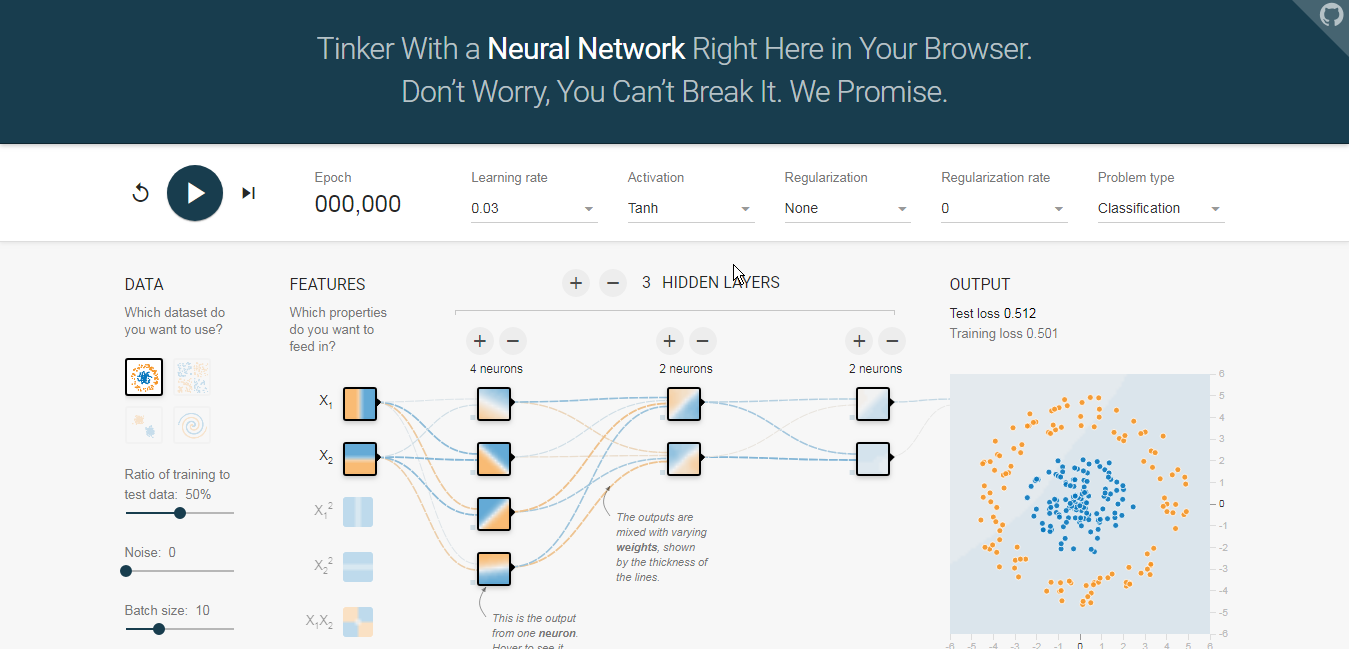

In AI, the “exploration vs. exploitation tradeoff” applies to learning calculations that need to procure new information and expand their reward in the meantime — what are alluded to as Reinforcement Learning issues. In this setting, lament is characterized as you may expect: a reduction in remuneration because of executing the learning calculation as opposed to carrying on ideally from the earliest starting point. Calculations that streamline for investigation will in general acquire more lament.

We use the multi arm bandit concept in Machine learning and applied to our data set to find out a set of people in mall their, purchasing behaviour.With combination of Apriori algorithm and Thomson sampling we determined which way the the consumers will go in a mall after purchasing a product. With data analytics and physical experimentation we found that the arrangement of certain products resulted in increased sales.

The base code that we used for making changes in our logic is provided below.

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

dataset = pd.read_csv('Malldataset.csv')

# Implementing Thompson Sampling

import random

N = 250000

d = 700

ads_selected = []

numbers_of_rewards_1 = [0] * d

numbers_of_rewards_0 = [0] * d

total_reward = 0

for n in range(0, N):

ad = 0

max_random = 0

for i in range(0, d):

random_beta = random.betavariate(numbers_of_rewards_1[i] + 1, numbers_of_rewards_0[i] + 1)

if random_beta > max_random:

max_random = random_beta

ad = i

ads_selected.append(ad)

reward = dataset.values[n, ad]

if reward == 1:

numbers_of_rewards_1[ad] = numbers_of_rewards_1[ad] + 1

else:

numbers_of_rewards_0[ad] = numbers_of_rewards_0[ad] + 1

total_reward = total_reward + reward

plt.hist(ads_selected)

plt.title('Histogram of Dataset')

plt.xlabel('Ads')

plt.ylabel('Number of users chooses a lane')

plt.show()

This paragraph provides clear idea in favor of the new visitors of blogging, that truly how to do running a blog. Lira Vite Gracie